The definition of the complexity of a system is itself complex: authors in different historical periods and disciplines have used different definitions. In the early 1990s Seth Lloyd catalogued at least 32 definitions, including information (Shannon), entropy (Gibbs-Boltzmann), algorithmic complexity, self-delimiting code length, minimum description length, number of parameters, degrees of freedom or dimensions, mutual information or channel capacity, correlation, fractal dimension, self-similarity, sophistication, topological machine size, subgraph or subtree diversity, time or space computational complexity, logical or thermodynamic depth, large-scale order, self-organization, edge of chaos and others.

The following quotations (apart from the last two) come from a special issue of Science on “Complex Systems” featuring many key figures in the field (Science 2 April 1999).

1. “To us, complexity means that we have structure with variations.” (Goldenfeld and Kadanoff 1999, p. 87)

2. “In one characterization, a complex system is one whose evolution is very sensitive to initial conditions or to small perturbations, one in which the number of independent interacting components is large, or one in which there are multiple pathways by which the system can evolve. Analytical descriptions of such systems typically require nonlinear differential equations. A second characterization is more informal; that is, the system is “complicated” by some subjective judgment and is not amenable to exact description, analytical or otherwise.” (Whitesides and Ismagilov 1999, p. 89)

3. “In a general sense, the adjective “complex” describes a system or component that by design or function or both is difficult to understand and verify. [...] complexity is determined by such factors as the number of components and the intricacy of the interfaces between them, the number and intricacy of conditional branches, the degree of nesting, and the types of data structures.” (Weng et al. 1999, p. 92)

4. “Complexity theory indicates that large populations of units can self-organize into aggregations that generate pattern, store information, and engage in collective decision-making.” (Parrish and Edelstein-Keshet 1999, p. 99)

5. “Complexity in natural landform patterns is a manifestation of two key characteristics. Natural patterns form from processes that are nonlinear, those that modify the properties of the environment in which they operate or that are strongly coupled; and natural patterns form in systems that are open, driven from equilibrium by the exchange of energy, momentum, material, or information across their boundaries.” (Werner 1999, p. 102)

6. “A complex system is literally one in which there are multiple interactions between many different components.” (Rind 1999, p. 105)

7. “Common to all studies on complexity are systems with multiple elements adapting or reacting to the pattern these elements create.” (Brian Arthur 1999, p. 107)

8. “In recent years the scientific community has coined the rubric ‘complex system’ to describe phenomena, structure, aggregates, organisms, or problems that share some common theme: (i) They are inherently complicated or intricate [...]; (ii) they are rarely completely deterministic; (iii) mathematical models of the system are usually complex and involve non-linear, ill-posed, or chaotic behavior; (iv) the systems are predisposed to unexpected outcomes (so-called emergent behavior).” (Foote 2007, p. 410)

9. “Complexity starts when causality breaks down” (Editorial 2009)


Generally, complex systems may have the following features:

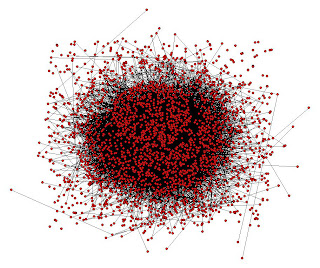

- Very large number of elements and connections

- For example, the human brain has about 10^11 to 10^12 neurons, the world population is about 10^10 people, a laser involves about 10^18 atoms, and a fluid contains about 10^23 molecules per cubic centimeter. The number of connections or relationships can grow much faster than the number of elements (the number of possible pairs alone grows quadratically). In the human brain, for example, if every neuron is connected through about 1000 dendrites to other neurons, one gets about 10^14 to 10^15 connections.

- Difficult to determine boundaries

- It can be difficult to determine the boundaries of a complex system. The decision is ultimately always made by the observer, and is therefore always subjective, depending on the paradigms and the epistemology of the observer.

- May be open

- Complex systems are usually open systems

- May have a memory

- The history of a complex system may be important: complex systems are dynamical systems that change over time, and prior internal states may influence present states. For example, they can exhibit hysteresis.

- May be nested

- The components of a complex system may themselves be complex systems. For example, an economic system is made up of organisations, which are made up of people, who are made up of cells, all of which are complex systems.

- Different structures of the internal dynamic networks

- The dynamic networks of internal and external interconnections may have different topologies at small and large scale. In the human cortex for example, we see dense local connectivity and a few very long axon projections between regions inside the cortex and to other brain regions.

- May produce emergent phenomena

- Complex systems may exhibit behaviors that are emergent, which is to say that while the results may be deterministic, they may have properties that can only be studied at a higher level. For example, the termites in a mound have physiology, biochemistry and biological development that belong to one level of analysis, while their social behavior is a property that emerges from the collection of termites and needs to be analysed at a different level. The most relevant emergent phenomenon is, of course, life.

- Relationships are non-linear

- Since the system's internal processes and/or the relationships between inputs and outputs are non-linear, a small internal or input perturbation may cause several types of effects: a large one (like the butterfly effect), a proportional one, or even no effect at all. In linear systems the effect is always directly proportional to the cause.

- Relationships contain feedback loops

- Both negative (damping and stabilizing) and positive (amplifying) feedback loops are always found in complex systems. The effects of an element's behaviour are fed back in such a way that the element itself is altered. Generally, negative loops are essential for the stabilization of the system and for its homeostasis, that is, for its very existence.
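The point about non-linear relationships in the list above can be illustrated numerically. What follows is a minimal sketch, not taken from any of the cited sources: it assumes the logistic map x -> r·x·(1 - x) with r = 4 as a stand-in for a non-linear system and the scaling map x -> a·x as a stand-in for a linear one. The function names, starting value and perturbation size are hypothetical choices made only for illustration.

```python
def logistic_step(x, r=4.0):
    """One step of the logistic map, a simple non-linear rule (assumed example)."""
    return r * x * (1.0 - x)


def linear_step(x, a=0.5):
    """One step of a linear rule: the output is proportional to the input."""
    return a * x


def max_gap(step, x0, eps, n_steps):
    """Follow two trajectories that start eps apart and return the
    largest distance observed between them over n_steps iterations."""
    a, b = x0, x0 + eps
    largest = abs(b - a)
    for _ in range(n_steps):
        a, b = step(a), step(b)
        largest = max(largest, abs(b - a))
    return largest


# Same starting point and same tiny perturbation for both systems.
x0, eps, n_steps = 0.2, 1e-9, 60

linear_gap = max_gap(linear_step, x0, eps, n_steps)
logistic_gap = max_gap(logistic_step, x0, eps, n_steps)

print(f"linear rule:   largest gap = {linear_gap:.3e}")
print(f"logistic rule: largest gap = {logistic_gap:.3e}")
```

Under these assumptions the linear rule should never let the gap exceed its initial size, while the logistic rule should amplify the same perturbation until it is comparable to the state itself, which is the kind of sensitivity usually associated with the butterfly effect.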

Among the many possible candidates, two papers are commonly cited as the key works that mark the beginning of the definition and method of a science of complexity.

The first is Science and Complexity by Warren Weaver of 1947-48. In this work, Weaver clearly outlines the scope of complexity by splitting scientific problems into three categories:

- problems of simplicity

These are the ones Weaver defines as two-variable problems. The typical example is a billiard table with two balls: given the initial conditions, classical mechanics is able to predict with absolute precision the position and speed of the balls after the stroke.

- problems of disorganized complexity

- problems of organized complexity

Weaver points out that these problems are precisely those most vital:

"Science has, to date, succeeded in solving a bewildering number of relatively easy problems, whereas the hard problems, and the ones which perhaps promise most for man's future, lie ahead."

"What makes an evening primrose open when it does? Why does salt water fail to satisfy thirst? Why is one chemical substance a poison when another, whose molecules have just the same atoms but assembled into a mirror-image pattern, is completely harmless? Why does the amount of manganese in the diet affect the maternal instinct of an animal? What is the description of aging in biochemical terms? ... All these are certainly complex problems, but they are not problems of disorganized complexity, to which statistical methods hold the key. They are all problems which involve dealing simultaneously with a sizable number of factors which are interrelated into an organic whole. They are all, in the language here proposed, problems of organized complexity"

The birth of modern science in the seventeenth century is identified with a drastic and initially successful choice: to give up studying nature as an organic whole and to focus on simple, quantifiable phenomena, isolating them from the rest. In other words, it meant taking apart a complex mechanism and reducing it to many pieces, each small enough to be well understood in its evolution and described by simple mathematical laws.

This methodological approach, which goes by the name of reductionism, is the source of the most impressive progress in the history of the knowledge of nature. In little more than two centuries, scientists discovered the laws of gravity that govern the motion of the planets; the laws of thermodynamics, which allowed the construction of the internal combustion engine; the equations of electromagnetism underlying electrical engineering, optics and modern telecommunications; and finally, at the beginning of the twentieth century, relativity and quantum physics, which reveal the deep behavior of matter as well as the history of the universe from the Big Bang to today.

These almost unbelievable successes, however, considerably distorted the cognitive perspective: the growing tendency was to regard as important and fundamental only the simple parts obtained from the division, ignoring the complex system from which they had been isolated.

From a winning methodology, the reductionist approach gradually turned into an impoverished vision of nature, a sort of undeclared philosophy of knowledge that in some extreme cases, such as string theory, takes on an almost mystical shade: if only the elementary part is fundamental, everything that comes from the cooperation of many parts has little scientific dignity. And, of course, once a part considered elementary is in turn decomposed, it also loses its "dignity" in favor of its components.

The Canard Digérateur, or Digesting Duck - 1738, Jacques de Vaucanson

In the postwar period, first in the United States but of course also throughout the rest of the world, physics was culturally dominated by elementary particle scholars, who naturally considered only the ultimate components of matter to be fundamental. Then, in 1972, P. W. Anderson, who five years later won the Nobel Prize in Physics for his fundamental contributions to the theoretical understanding of the electronic structure of magnetic and disordered systems, published the second article chosen here, one destined to make history, entitled "More is different".

In his article Anderson argued an absolutely innovative thesis, which can nevertheless be summarized in a few sentences that are as significant as they are revolutionary:

"The ability to reduce everything to simple fundamental laws does not imply the ability to start from those laws and reconstruct the universe"

"The constructionist hypothesis breaks down when confronted with the twin difficulties of scale and complexity. The behavior of large and complex aggregates of elementary particles, it turns out, is not to be understood in terms of a simple extrapolation of the properties of a few particles. Instead, at each level of complexity entirely new properties appear"

"The whole becomes not only more but also very different than the sum of its parts".

The various levels of nature, corresponding to the different scales of observation, are thus only partially related to each other, and the more distant they are, the more we can consider them independent.


On this basis, Anderson completes the manifesto of anti-reductionism by proposing a new scheme for the hierarchical organization of the sciences, which is still a key reference today for classifying the sciences of complexity. The key to understanding it is:

"The elementary entities of science X obey the laws of science Y. But this hierarchy does not imply that science X is just applied Y".

In fact, there's more:

"We expect to meet very basic issues every time we compose the parts to form a more complex system and try to understand the substantially new behaviors that result"

(freely adapted from the presentation by Prof. Mario Rasetti "Theory of Complexity", 2008)

Anderson emphasizes that the failure of the reductionist hypothesis does not imply the success of a "constructionist" one:

"In fact, the more the elementary particle physicists tell us about the nature of the fundamental laws, the less relevance they seem to have to the very real problems of the rest of science, much less to those of society."

Anderson's work is a fierce criticism of both classical reductionism and constructionism. At the end of his article he gives an ironic example, drawn from a famous dialogue between Francis Scott Fitzgerald and Ernest Hemingway in Paris in the 1920s:

FITZGERALD (constructionist idealist): The rich are different from us.

HEMINGWAY (radical reductionist): Yes, they have more money.

These two works therefore define the space in which the science of complexity operates, a science that acts around and between the sciences rather than within any one of them. They also establish the need for new methodologies that go beyond reductionism and constructionism (that is, beyond dismantling or reassembling the system while losing its specifically complex properties) and that can consider the complex system as an organic whole.

The Beauty of Complexity

The study of complexity is typically interdisciplinary, or perhaps multi-disciplinary, and some possible applications, examples of models and authors in various fields are:

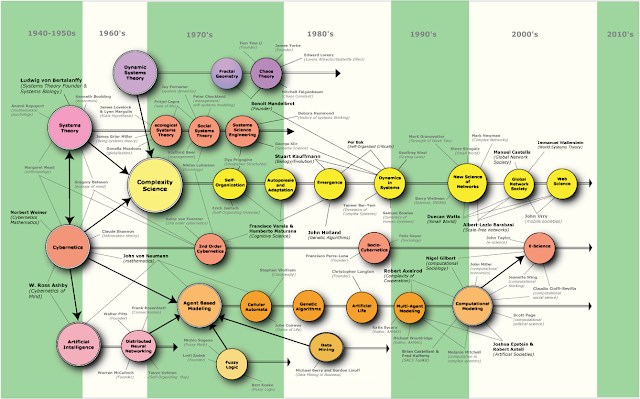

- Physics: formation of spatio-temporal patterns in lasers, nonlinear optics, hydrodynamics, plasmas, geophysics, local and global meteorology, astrophysics. Models: Synergetics. Authors: Haken.

- Chemistry: hypercycles, formation of macroscopic spatio-temporal patterns, such as in the Belousov-Zhabotinsky reaction, dissipative patterns, irreversible processes. Models: hypercycle theory, dissipative systems theory. Authors: Eigen, Prigogine.

- Biology: biophysics, cybernetic biology, models of evolution and development, evolution of biomolecules (Eigen-Schuster model), morphogenesis (e.g. Gierer-Meinhardt model), growth of plants and animals, modelling of autopoiesis and self-organizing structures such as functional models of DNA, cells, organisms and the brain, evolutionary biology. Models: autopoiesis. Authors: Atlan, McCulloch, Braitenberg, Maturana, Varela, Gould, Kauffman, Bar-Yam.

- Medicine: brain activity, heartbeat, blood circulation. Authors: Braitenberg, Pribram.

- Neuroscience: brain activity patterns, mechanisms of vision. Authors: Pribram, Maturana, Varela.

- Cognitive Science: pattern recognition, motor control, switching among coordination states (e.g. Haken-Kelso-Bunz Model), neurophenomenology, Santiago theory of Cognition. Authors: Maturana, Varela.

- Computers: self-organization, synergetic computers, attractor networks, parallel computers.

- Psychology: psycho-physics, psycho-therapy. Models: systemic therapy, family systems therapy, Bateson project on human communication and interaction, Neuro-linguistic programming (NLP). Authors: Bateson, Laing, Milan school, Bandler, Grinder.

- Sociology: dynamics of groups, systemic theory of society and relationships among societies, socio-cybernetics, collective formation of order parameters governing human behaviour including formation of public opinion. Authors: Morin, Gallino, Parra Luna, Bar-Yam.

- Economics: Schumpeter cycles, economic models of companies, organizations, local and global economies, synergy effects. Authors: Gabaix.

- Political Sciences: politics modelling. Authors: Pasquino.

- Ecology and Ecosystems: competition between species, impact of climate, development of specific ecosystems, Gaia hypothesis. Authors: Lovelock, Margulis.

- Philosophy: the concept of self-organization, strong vs. weak emergence.

- Epistemology: establishment of paradigms in the sense of Kuhn, epistemology of complexity. Authors: Bateson, Morin, von Glasersfeld, von Foerster, Stengers, Atlan, Bocchi, Ceruti, László.

- Metaphysics: metaphysics of Quality. Authors: Pirsig.

- Control theory: indirect control via control parameters

- Theory of networks: activity patterns, stability, dynamics. Authors: Barabási, Cactus Group, Caldarelli.

- Virtual Networks and Communities: social and information networks, information diffusion. Authors: Adamic.

- Linguistics: origin of meaning.

- Information theory: compression and inflation of information, change of information in self-organization processes.

- Artificial Intelligence: distributed neural networks, cellular automata, fuzzy logic, computational models, mind models. Authors: McCulloch, Minsky, Hofstadter.

- Management theory: indirect control of processes, corporate identity, learning organizations, systems analysis of organizations. Authors: Senge.

A possible historical map of the various lines of evolution and development of studies on complexity is:

From the three main groups of Systems Theory, Cybernetics and Artificial Intelligence (or, more specifically, Cybernetics of Mental Systems) of the 1940s and 1950s, several lines of study branch out. The union of the first two leads to what, starting from the 1960s and 1970s, is called the Science of Complexity. In the 1970s in particular, Henri Atlan and others recognized that self-organization is nothing more than an emergent phenomenon that occurs when a complex system is in equilibrium in a delicate situation called the "edge of chaos". The focus thus shifted from self-organization to complex systems at the edge of chaos and, since 1978, the term "complexity theory" has begun to replace "cybernetics".
